Zuckerberg also showed how the neural interface can be used to compose messages (on WhatsApp, Messenger, Instagram, or via a connected phone’s messaging apps) by following your mimed “handwriting” across a flat surface. Though this feature reportedly won’t be available at launch, Zuckerberg said he had gotten up to “about 30 words per minute” in this silent input mode.

The most impressive part of Zuckerberg’s on-stage demo that will be available at launch was probably a “live caption” feature that automatically types out the words your conversation partner is saying in real time. The feature reportedly also filters out background noise so that it captions only the person you’re looking at.

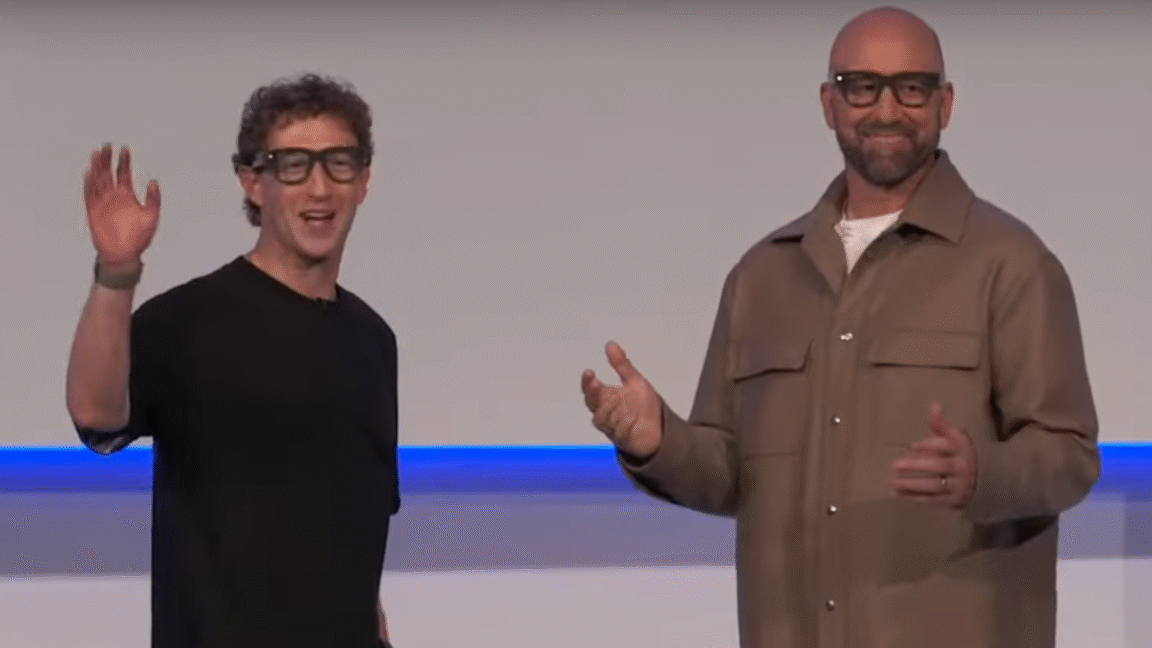

A Meta video demos how live captioning works on the Ray-Ban Display (though the field of view on the actual glasses is likely much more limited). Credit: Meta

Beyond those “gee whiz” kinds of features, the Meta Ray-Ban Display can basically mirror a small subset of your smartphone’s apps on its floating display. Being able to get turn-by-turn directions or see recipe steps on the glasses without having to glance down at a phone feels like a genuinely useful new interaction mode. Using the glasses’ display as a viewfinder to line up a photo or video (with the built-in 12-megapixel, 3x zoom camera) also seems like an improvement over previous display-free smartglasses.

But accessing basic apps like weather, reminders, calendar, and email on your tiny glasses display strikes us as likely less convenient than just glancing at your phone. And hosting video calls via the glasses necessarily means your partner sees what you’re seeing through the outward-facing camera, rather than your actual face.

Meta also showed off some pie-in-the-sky video about how future “Agentic AI” integration would be able to automatically make suggestions and note follow-up tasks based on what you see and hear while wearing the glasses. For now, though, the device represents what Zuckerberg called “the next chapter in the exciting story of the future of computing,” which should serve to take focus away from the failed VR-based metaverse that was the company’s last “future of computing.”